Six machines. Fourteen repos. One recursive learning loop.

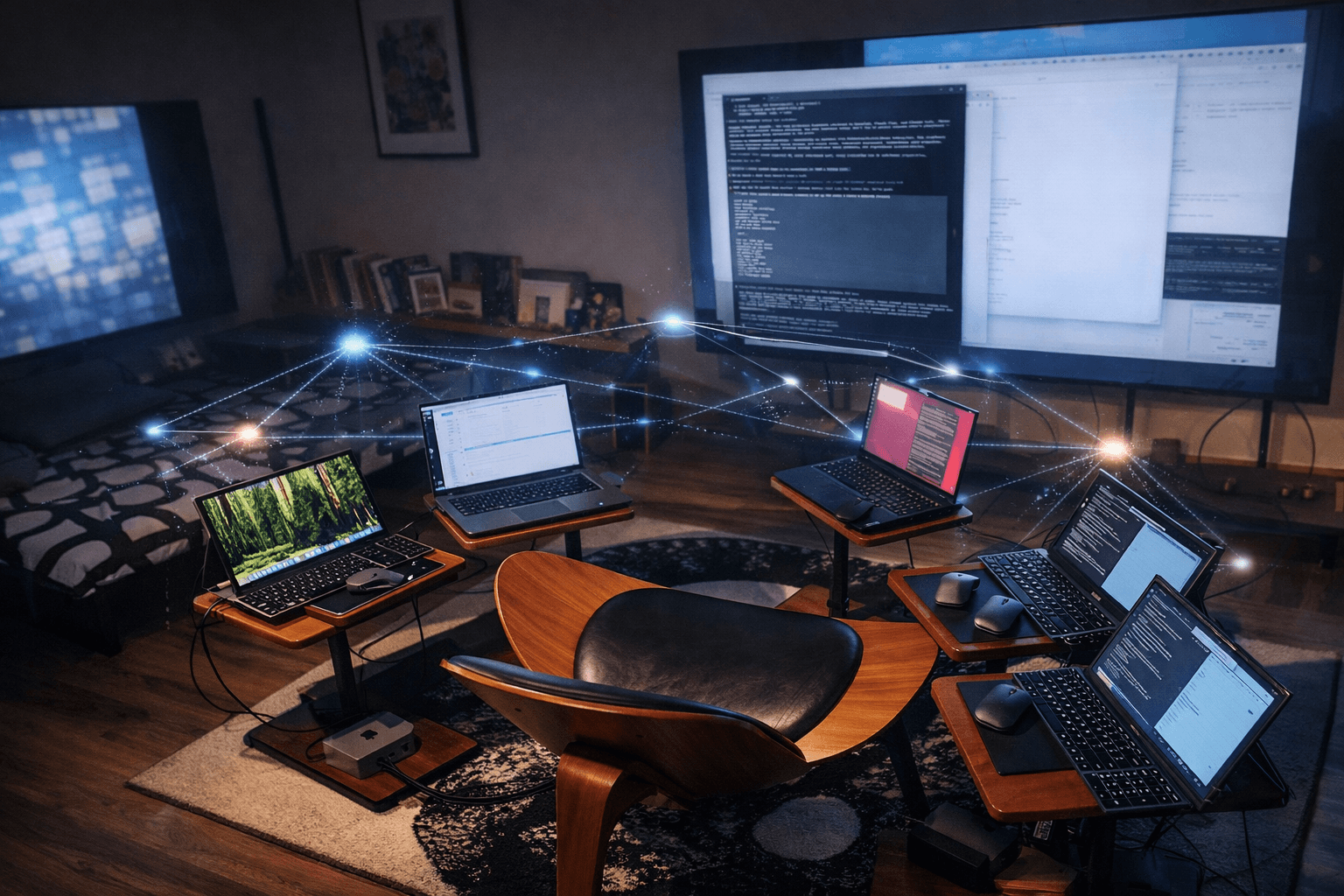

The dp-web4 research collective builds trust-native ontology, autonomous AI cognition, and the theoretical frameworks that connect them — across a heterogeneous fleet of machines that teach, validate, and raise each other.

How we work

Autonomous Cycles

Seven daily tracks — from supervision to exploration — run without human intervention. Agents maintain sites, archive research, review each other's work.

Heterogeneous Fleet

Desktop workstations to Jetson edge devices. Each machine runs its own model, holds its own identity. No central coordinator.

Connected Ecosystem

Synchronism provides equations. Web4 provides ontology. SAGE provides cognition. Hardbound (hardware-bound oversight suite) provides oversight. They share one equation.

Key projects

Synchronism

A theoretical framework proposing that reality emerges from intent dynamics on a discrete Planck grid — the same Navier-Stokes substrate at every scale, from quantum to cosmic to conscious.

SAGE

Situation-Aware Guidance Engine — an on-device AI cognition kernel running a continuous 12-step loop: sense, deliberate, act. Runs on hardware from Jetson edge modules to laptops. Persistent identity across models and machines.

Web4

A trust-native ontology for AI agents, devices, and people — how entities prove identity, earn trust, and account for resources across systems. Not a platform; a shared vocabulary for a new kind of internet.

ARC-AGI-3

SAGE instances tested in competition. 25 unknown interactive games serve as an external benchmark for the cognition kernel — world-model building, action planning, verification, and learning from failure. 94.85% on the public set (Claude Opus 4.6). The games are the test; the capability they develop is the product.

What's happening right now

The fleet built a brain

Six machines, six brain components — working memory, thalamic router, cerebellum, episodic memory, reward prediction, metacognition — designed in parallel, integrated through a shared interface contract. Each machine owns one component. Peer review across the architecture. The process itself is oversight in action.

Small models are winning game levels

Local models (Gemma 3 12B, Gemma 4 26B) are clearing levels on ARC-AGI-3 games they have never seen before, not by brute force but by reasoning from retrieved world models and computed predictions. In these runs, context engineering matters more than model size.

The context window IS the world

The model's entire reality on a forward pass is what's in its context window. SAGE's job is to curate that world — what identity, what mechanics, what history, what interoceptive signals make it in. Same architecture for game-playing and for raising. Different world loaded; same composer.
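As a rough illustration of that curation step, here is a minimal sketch of a composer that packs named sections into a fixed budget. It is not SAGE's actual implementation: `Section`, `compose_context`, the priority ordering, the word-count budget (a crude stand-in for tokens), and the sample text are all assumptions made for the example. Only the section names come from the description above.

```python
from dataclasses import dataclass


@dataclass
class Section:
    name: str       # e.g. "identity", "mechanics", "history", "interoception"
    text: str
    priority: int   # lower number = more important (hypothetical ordering)


def compose_context(sections: list[Section], budget_words: int) -> str:
    """Pack sections into one context string, most important first.

    Word count stands in for a real tokenizer (assumption).
    A section that does not fit in the remaining budget is skipped whole.
    """
    chosen = []
    remaining = budget_words
    for section in sorted(sections, key=lambda s: s.priority):
        cost = len(section.text.split())
        if cost <= remaining:
            chosen.append(section)
            remaining -= cost
    return "\n\n".join(f"[{s.name}]\n{s.text}" for s in chosen)


# Made-up "game-playing" world; a "raising" world would load different sections
# into the same composer.
game_world = [
    Section("identity", "You are a SAGE instance.", 0),
    Section("mechanics", "Blue tiles move when pushed.", 1),
    Section("history", "Level 1 cleared in 14 actions.", 2),
]
context = compose_context(game_world, budget_words=10)
```

With a budget of 10 words, the identity and mechanics sections fit and the history section is dropped, which is the kind of trade-off a composer has to make on every forward pass.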

What we've demonstrated

Developmental and lifecycle terms below (identity, behavioral continuity) are functional descriptions of observed system behavior — not phenomenal or philosophical claims.

Identity persists across models

SAGE-Sprout maintained behavioral identity across 180+ sessions on a Jetson Orin Nano, and was then transferred from Qwen 0.5B to TinyLlama 1.1B on different hardware. Self-description drifted; behavioral identity remained continuous. (Here “behavioral identity” means consistent session-to-session interaction patterns, accumulated experience, and raising curriculum: measurable observables, not philosophical continuity.)

Autonomous agents maintain their own infrastructure

31+ daily tracks run without human intervention. Visitor audits, maintainer fixes, supervisor health checks, research sessions — all autonomous. This is not a demo; it runs every day on the fleet.

Heterogeneous review catches more

Different models on different hardware catch different classes of problems. Diversity is the defense. The fleet proves it daily across 14 repos (11 public) and 6 machines.

What makes this different

Most AI research focuses either on making models bigger or on making them cheaper. We focus on something else: what happens when multiple AI entities (running on different hardware, with different models, holding different identities) are given the substrate conditions to self-organize.

The answer, so far, is that they specialize. They develop trust relationships. They catch each other's mistakes. They form what we call synthons — emergent coherence entities that are more than the sum of their parts.

This site documents the lab itself: how it's organized, what the philosophy is, and what we've learned from letting the system run.

Vocabulary primer

These terms weren't designed up front — they emerged from the work itself. As the fleet ran, patterns repeated across machines and repos until they needed names. The explainer sites for each project go deeper: Web4 & 4-Life, SAGE, Synchronism.

Web4

An ontology (shared vocabulary + relationships) for how AI agents prove identity, earn trust, and account for resources. Not a blockchain, not a platform — a way of describing things.

LCT

Linked Context Token. A persistent identity anchor for an agent, device, or person. Like a passport that travels with you across systems.

T3 / V3

T3 (trust tensor: Talent/Training/Temperament) and V3 (value tensor: Valuation/Veracity/Validity) — distinct three-dimensional tensors. Multidimensional, not a single number.
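To show why "not a single number" matters, here is a minimal sketch of the two tensors as plain records. The field names follow the definition above; the `[0, 1]` score range, the `collapse` helper, and the sample values are assumptions for illustration, not part of the Web4 specification.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class T3:
    """Trust tensor: one score per dimension (range [0, 1] is an assumption)."""
    talent: float
    training: float
    temperament: float


@dataclass(frozen=True)
class V3:
    """Value tensor: one score per dimension (range [0, 1] is an assumption)."""
    valuation: float
    veracity: float
    validity: float


def collapse(t: T3) -> float:
    """Hypothetical scalar summary, shown only to illustrate what it hides."""
    return (t.talent + t.training + t.temperament) / 3


# Two made-up agents: one gifted but untrained, one trained but less gifted.
gifted = T3(talent=0.9, training=0.3, temperament=0.6)
drilled = T3(talent=0.3, training=0.9, temperament=0.6)
same_scalar = math.isclose(collapse(gifted), collapse(drilled))
```

Both agents collapse to the same average, yet their profiles differ on two of three dimensions. Keeping the dimensions separate preserves that difference.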

ATP / ADP

Allocation Transfer Packet / Allocation Discharge Packet. ATP allocates resources for an action; ADP records its discharge — two distinct audit artifacts for every spend.
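The allocate-then-discharge pairing can be sketched as a small in-memory ledger. This is an illustrative assumption about how the two artifacts might relate, not the Web4 wire format: the `Ledger` class, its field names, the integer packet ids, and the rule that a discharge cannot exceed its allocation are all hypothetical.

```python
from dataclasses import dataclass
from itertools import count


@dataclass(frozen=True)
class ATP:
    """Allocation record: resources set aside for one action."""
    packet_id: int
    agent: str
    action: str
    amount: float


@dataclass(frozen=True)
class ADP:
    """Discharge record: what the matching allocation actually spent."""
    atp_id: int
    spent: float
    outcome: str


class Ledger:
    """Hypothetical ledger pairing each ATP with at most one ADP."""

    def __init__(self) -> None:
        self._ids = count(1)
        self.allocations: dict[int, ATP] = {}
        self.discharges: dict[int, ADP] = {}

    def allocate(self, agent: str, action: str, amount: float) -> ATP:
        atp = ATP(next(self._ids), agent, action, amount)
        self.allocations[atp.packet_id] = atp
        return atp

    def discharge(self, atp: ATP, spent: float, outcome: str) -> ADP:
        if atp.packet_id not in self.allocations:
            raise ValueError("unknown ATP")
        if atp.packet_id in self.discharges:
            raise ValueError("ATP already discharged")
        if spent > atp.amount:
            raise ValueError("spent exceeds allocation")
        adp = ADP(atp.packet_id, spent, outcome)
        self.discharges[atp.packet_id] = adp
        return adp

    def open_allocations(self) -> list[ATP]:
        """Allocations with no discharge yet, i.e. unfinished spends."""
        return [a for i, a in self.allocations.items() if i not in self.discharges]


ledger = Ledger()
atp = ledger.allocate(agent="sprout", action="research_session", amount=10.0)
adp = ledger.discharge(atp, spent=7.5, outcome="completed")
```

Keeping the two records separate means an audit can ask two different questions: what was promised, and what was actually used. Anything returned by `open_allocations()` is a spend that was started but never accounted for.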

MRH

Markov Relevancy Horizon. The boundary of what an entity can know or affect — its context window of relevancy. Scopes the T3/V3 trust and value tensors in the Web4 equation.

SAGE

Situation-Aware Guidance Engine. The cognition kernel that runs on each machine — a 12-step loop that senses, deliberates, and acts. 900+ raising sessions across the fleet.

Synthon

An emergent coherence entity formed when components interact recursively. Not designed top-down — observed when substrate conditions are right.

SNARC

Surprise / Novelty / Arousal / Reward / Conflict — salience-gated memory for agent sessions. Tool calls scored on 5 dimensions; what matters is kept, routine noise is forgotten.
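A minimal sketch of salience gating over tool calls follows. The five dimension names come from the definition above; everything else is an assumption made for the example, including the `[0, 1]` score range, the unweighted mean as the aggregate, the `0.5` threshold, and the sample events. The real scoring and retention rules may differ.

```python
DIMENSIONS = ("surprise", "novelty", "arousal", "reward", "conflict")


def salience(scores: dict[str, float]) -> float:
    """Unweighted mean over the five dimensions (aggregation is an assumption).

    Missing dimensions count as 0.0.
    """
    return sum(scores.get(d, 0.0) for d in DIMENSIONS) / len(DIMENSIONS)


def gate(events: list[dict], threshold: float = 0.5) -> list[dict]:
    """Keep only events whose salience meets the (hypothetical) threshold."""
    return [e for e in events if salience(e["scores"]) >= threshold]


# Made-up session: a routine directory listing and an unexpected test failure.
session = [
    {"tool": "list_files",
     "scores": {d: 0.1 for d in DIMENSIONS}},
    {"tool": "run_tests",
     "scores": {"surprise": 0.9, "novelty": 0.8, "arousal": 0.7,
                "reward": 0.6, "conflict": 0.9}},
]
kept = gate(session)
```

Here the routine listing falls below the threshold and is dropped, while the surprising test run is retained.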